Located in Pittsburgh, PA

Case Study

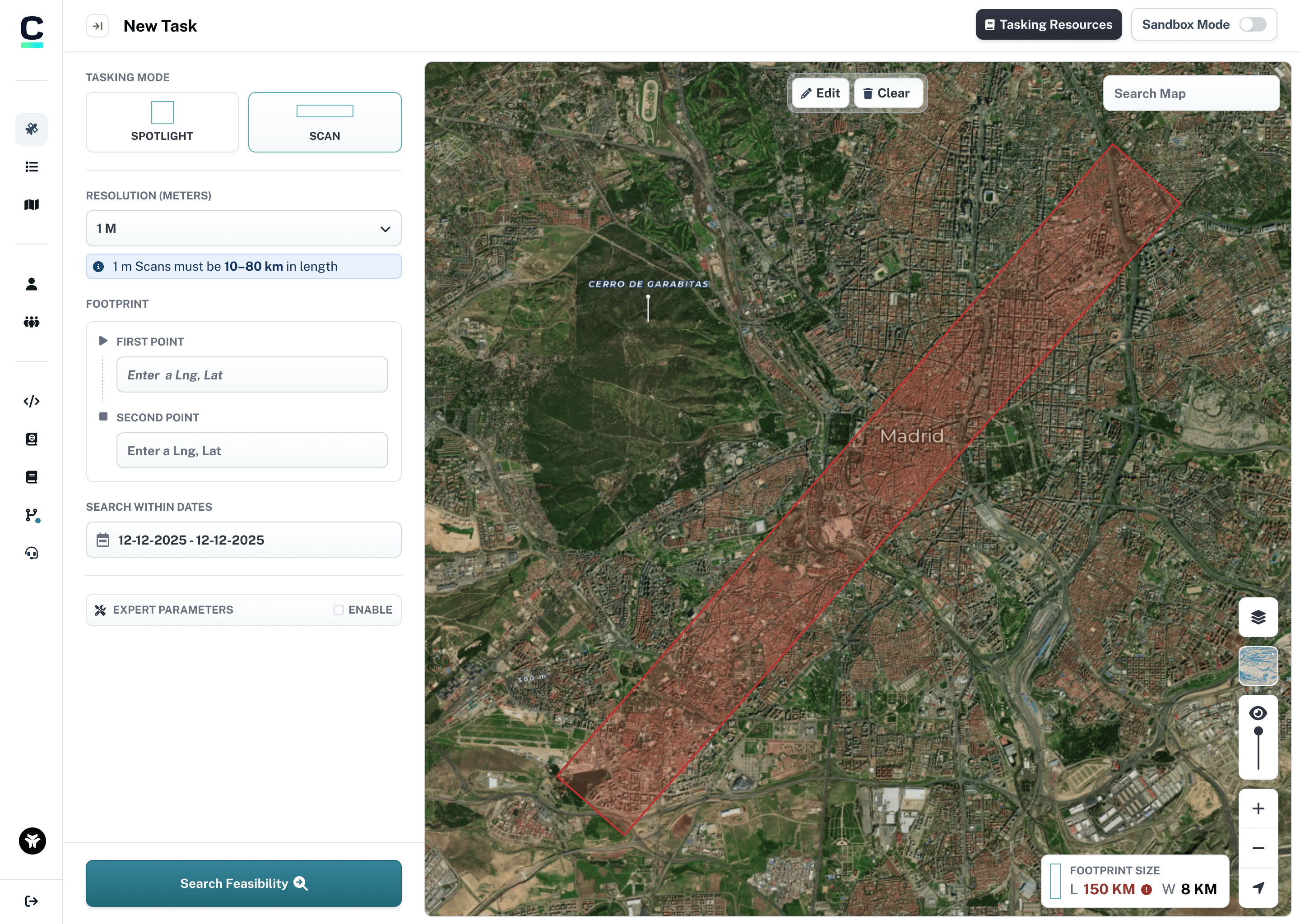

Canopy Scan Mode

A new way to capture images of Earth.

Overview

Umbra Space, a leading provider of space imaging, set out to make satellite data truly accessible and on‑demand. Canopy was created to let users get imagery without the antiquated hurdles of phone calls and spreadsheet orders.

Users

U.S. government agencies, sovereign nations, image resellers, analytics companies, journalists, and scientific researchers.

Problem

Users struggled to place orders on the platform and to work with its technical parameters. The app needed a polished, consistent design, and the MVP lacked key features such as ordering at scale. Customers leaned on our support team to work around these shortcomings.

Goals

Steer Canopy from an MVP to a fully featured product. Introduce new functionality and offerings that serve customers' missions. Improve usability and iterate on the design to make the app a joy to use.

Company

Umbra Space

Roles

End-to-end UX research and design, UX engineering, and prototyping.

Team

Lead product designer (self), product manager, software engineering, flight software lead.

Timeline

2025 Q1 – Q3

Impact

100%

Fully automated ordering

Scan tasks can now be placed end to end with no manual intervention from the Umbra team

⭐⭐⭐⭐⭐

High customer satisfaction

Praised by customers for ease of use and a modern, intuitive interface

✓

Contract requirements met

Delivered on key contractual milestones, unlocking continued customer engagement

Define and ideate

Through competitor analysis and stakeholder interviews across the satellite imagery industry, I found no existing UI pattern for this type of interaction, which made this a first-of-its-kind design challenge. Without direct competitors to reference, I looked to adjacent use cases:

- Polygon drawing tools in mapping applications

- Property boundary tools on real estate platforms

- Linear measurement tools in GIS software

The core insight: users needed a simple start-to-end point drawing interaction that felt natural on a map interface while providing the precision required for satellite tasking.

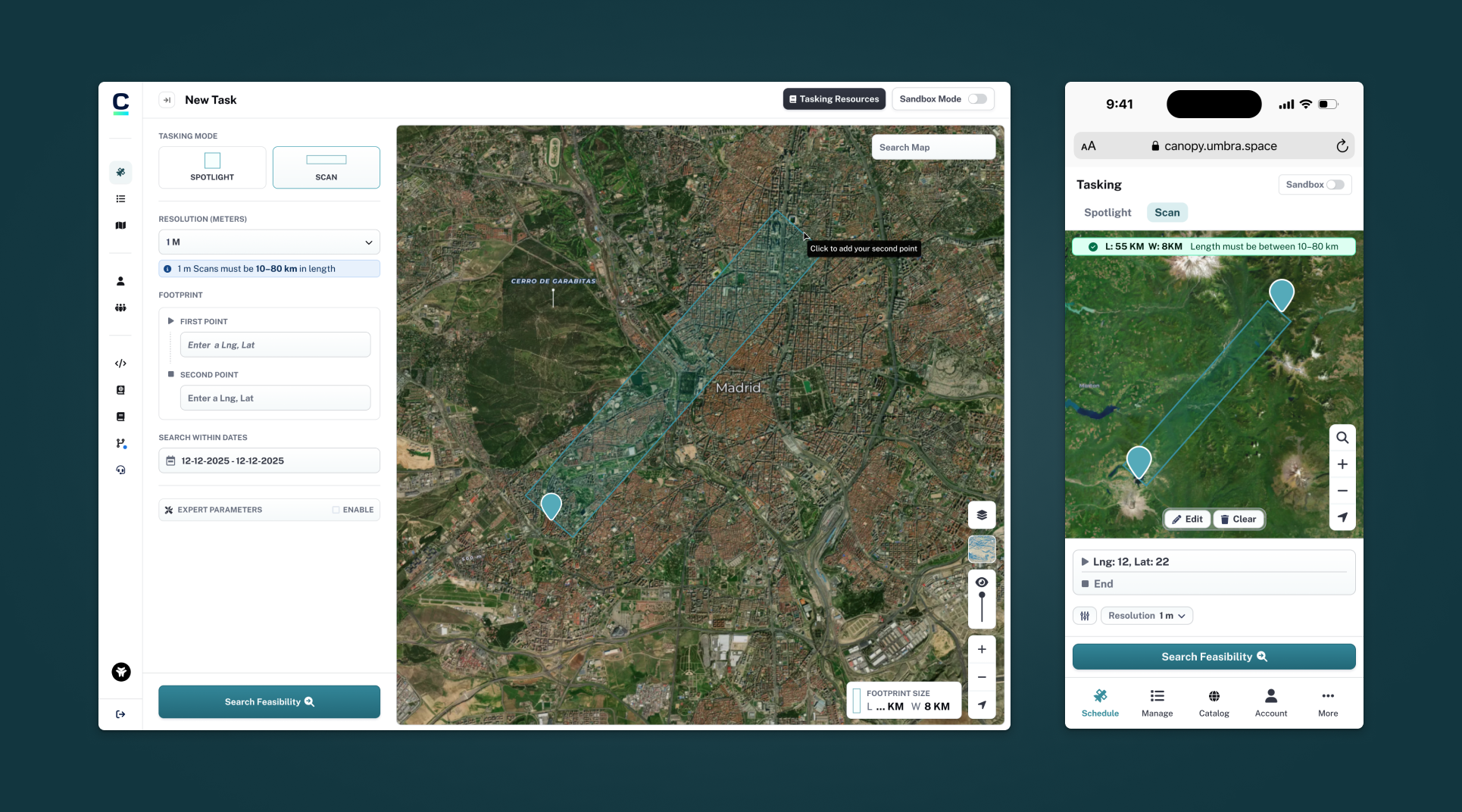

Early directions & iterations

From static concepts to interactive prototypes: I began with Figma explorations but quickly moved to a coded prototype, because the feature could only be evaluated through real interaction. The default Mapbox draw controls fell short of what the interaction required, so I used Claude AI to help build a custom implementation. This allowed me to:

- Test real map interactions and physics

- Iterate rapidly on gesture and feedback patterns

- Conduct meaningful internal user testing despite NDA constraints

Deliverables

Through iterative testing with internal stakeholders, I landed on a direct path-drawing solution: click a start point, drag to set direction and length, then click to confirm the end point. This approach:

- Matched how users conceptualized the scan

- Aligned with API data structure (start/end coordinates + width)

- Provided immediate visual feedback

- Supported any orientation naturally
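To illustrate why a start point, end point, and width map so cleanly onto a drawn path, here is a minimal sketch of how a map preview could turn those three values into a rectangular footprint. It is not Umbra's API or the prototype's actual code: the `ScanTask` shape, the `scanFootprint` function, the `widthMeters` field, and the flat-earth approximation are all assumptions made for illustration.

```typescript
// Hypothetical types and names for illustration only; not Umbra's API schema.
type LngLat = [number, number]; // [longitude, latitude] in degrees

interface ScanTask {
  start: LngLat;
  end: LngLat;
  widthMeters: number;
}

// Approximate length of one degree of latitude, in meters.
const METERS_PER_DEGREE_LAT = 111320;

// Build a closed rectangular ring around the start-to-end path.
// Uses a local flat-earth approximation, which is an assumption that
// only holds reasonably well for short paths away from the poles.
function scanFootprint(task: ScanTask): LngLat[] {
  const [lng0, lat0] = task.start;
  const [lng1, lat1] = task.end;

  // Longitude degrees shrink with latitude, so scale by cos(mid latitude).
  const midLatRad = (((lat0 + lat1) / 2) * Math.PI) / 180;
  const metersPerDegreeLng = METERS_PER_DEGREE_LAT * Math.cos(midLatRad);

  // Path vector in meters.
  const dx = (lng1 - lng0) * metersPerDegreeLng;
  const dy = (lat1 - lat0) * METERS_PER_DEGREE_LAT;
  const length = Math.hypot(dx, dy);
  if (length === 0) {
    throw new Error("start and end must be different points");
  }

  // Vector perpendicular to the path, scaled to half the scan width.
  const half = task.widthMeters / 2;
  const nx = (-dy / length) * half;
  const ny = (dx / length) * half;

  // Shift a point to one side of the path (sign = 1) or the other (sign = -1).
  const offset = ([lng, lat]: LngLat, sign: number): LngLat => [
    lng + (sign * nx) / metersPerDegreeLng,
    lat + (sign * ny) / METERS_PER_DEGREE_LAT,
  ];

  const a = offset(task.start, 1);
  const b = offset(task.end, 1);
  const c = offset(task.end, -1);
  const d = offset(task.start, -1);
  return [a, b, c, d, a]; // first point repeated to close the ring
}
```

Because the footprint is derived entirely from the two clicked points and a width, the same code handles any orientation, and the resulting ring can be redrawn on every pointer move for immediate feedback. The closed ring of `[longitude, latitude]` pairs matches the layout of a GeoJSON polygon ring, so it could plausibly be handed to a map layer as is.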

With the interaction settled, I returned to static designs in Figma to tie together the overall layout and the interactions between the sidebar and the map.